Production of memory chips has shifted away from standard models in favour of the extra-powerful versions needed for AI systems. Credit: SeongJoon Cho/Bloomberg/Getty

Video gamers were among the first to grumble when supplies of random access memory (RAM) chips began to run short last year, causing prices to soar. But the ongoing crisis — which has been dubbed RAMmageddon and is expected to linger well into 2027 — is affecting some scientists, as well.

The shortage is driven by the rise of artificial-intelligence systems, which has created a voracious demand for high-speed memory chips. Over the course of 2025, some forms of RAM tripled in price, causing problems for resource-constrained laboratories that already faced barriers to accessing powerful computing tools. The shortage is also pushing researchers to develop more efficient algorithms and hardware, to reduce the amount of memory needed.

“Scientific research increasingly relies on large-scale computing infrastructure,” says Matteo Rinaldi, director of the Institute for NanoSystems Innovation at Northeastern University in Boston, Massachusetts. “And many of these workloads require substantial memory capacity.”

Memory loss

RAM chips help to provide short-term storage for data that are actively in use, allowing a computer’s processor to quickly access the information it needs. AI uses more complex memory chips than those found in personal computers, and surging demand has pushed manufacturers to shift most of their production to the high-capacity ones needed to train AI models.

The result is higher prices for both standard chips and the personal computers that rely on them: memory now accounts for more than one-third of the cost of building a computer, up from about 15% just a few months ago, according to the computer giant HP, in Palo Alto, California.

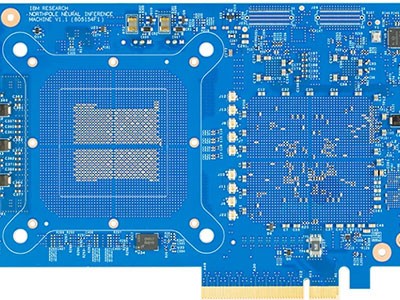

‘Mind-blowing’ IBM chip speeds up AI

Even with the shift in production to high-capacity chips, there is still not enough of the complex memory necessary for training AI to meet demand, says Tom Coughlin, a technology consultant in Atascadero, California. It could take manufacturers 18 months or more to ramp up supply. “Demand is huge, and supply is fixed right now,” he says. “If you’re a smaller player, or a professor at a university, you may or may not be able to use those resources.”

Well-funded laboratories can probably shoulder the added cost, says Rinaldi. But the high prices for memory chips and cloud-based computing infrastructure are likely to intensify the barriers that already make it difficult for researchers in less affluent settings to access resources for AI and other sophisticated computer models.

Cutting back

For example, Pravallika Sree Rayanoothala, a plant pathologist at Centurion University of Technology and Management in Paralakhemundi, India, says the shortage pushed her and her colleagues to reduce the number of crops they study in a project that uses models built from large data sets to forecast disease risk in agricultural crops.

To further reduce their need to purchase increasingly expensive memory services on the cloud, Rayanoothala and her teammates break up their data into chunks to be modelled separately. It gets the job done, she says, but it slows down data analysis. “The project timeline is increasing, and operational expenses are increasing,” she says. “Slow model development is delaying tools for early disease prediction.”

Abejide Ade-Ibijola says that the RAM shortage has not posed a problem for GRIT Lab Africa, the nonprofit research institute he founded in Johannesburg, South Africa, where he receives industry support for his work with AI. But researchers in Africa who do not have access to international funding will sometimes travel to wealthier universities to do their research, he says. “They stay for six months, analyse their data, and leave with a PDF,” Ade-Ibijola says. “It’s polarized.”